SPCL_Bcast(COMM_WORLD)

What: SPCL_Bcast is an open, online seminar series that covers a broad range of topics around parallel and high-performance computing, scalable machine learning, and related areas.

Who: We invite top researchers and engineers from all over the world to speak.

Where: Anyone is welcome to join over Zoom! This link will always redirect to the right Zoom meeting. When possible, we make recordings available on our YouTube channel.

Old talks: See the SPCL_Bcast archive.

Social media: Follow along with #spcl_bcast on Twitter!

When: Every two weeks on Thursdays, at 9AM or 6PM CET.

Fugaku: The First 'Exascale' Supercomputer – Past, Present and Future

Abstract: Fugaku is the first 'exascale' supercomputer in the world, not because of its peak double-precision flops, but because of its demonstrated performance in the real applications that were expected of exascale machines when they were conceived 10 years ago, as well as reaching actual exaflops in new breeds of benchmarks such as HPL-AI. But the importance of Fugaku lies in the "applications first" philosophy under which it was developed, and its resulting mission to be the centerpiece for rapid realization of the so-called Japanese 'Society 5.0' as defined by the Japanese S&T national policy. As such, Fugaku's immense power is directly applicable not only to traditional scientific simulation applications, but also to Society 5.0 applications that encompass the convergence of HPC, AI, and Big Data as well as Cyber (IDC & Network) vs. Physical (IoT) space, with immediate societal impact. In fact, Fugaku is already in partial operation a year ahead of schedule, primarily to obtain early Society 5.0 results, including combatting COVID-19 and resolving other important societal issues. The talk will introduce how Fugaku was conceived, analyzed, and built over the 10-year period, look at its current efforts regarding Society 5.0 and COVID, and touch upon our thoughts on the next-generation machine, or "Fugaku NeXT".

Bio: Satoshi Matsuoka is the director of RIKEN R-CCS, the top-tier HPC center in Japan which operates the K Computer and will host its successor Supercomputer Fugaku, and he is a Specially Appointed Professor at Tokyo Tech since 2018. He had been a Full Professor at the Global Scientific Information and Computing Center (GSIC), Tokyo Institute of Technology, since 2000, where he has been the leader of the TSUBAME series of supercomputers that have won many accolades such as world #1 in power-efficient computing. Satoshi Matsuoka also leads various major supercomputing research projects in areas such as parallel algorithms and programming, resilience, green computing, and convergence of Big Data/AI with HPC. He has written over 500 articles, chaired numerous ACM/IEEE conferences, and has won many awards, such as the ACM Gordon Bell Prize in 2011 and the highly prestigious 2014 IEEE-CS Sidney Fernbach Memorial Award.

A Paradigm Shift to Second Order Methods for Machine Learning

Abstract: The amount of compute needed to train modern NN architectures has been doubling every few months. With this trend, it is no longer possible to perform brute-force hyperparameter tuning to train the model to good accuracy. First-order methods such as Stochastic Gradient Descent, however, are quite sensitive to such hyperparameter tuning and can easily diverge on challenging problems. Many of these problems can be addressed with second-order optimizers. In this direction, we introduce AdaHessian, a new stochastic optimization algorithm. AdaHessian directly incorporates approximate curvature information from the loss function, and it includes several novel performance-improving features, including: (i) a fast Hutchinson-based method to approximate the curvature matrix with low computational overhead; and (ii) spatial/temporal block-diagonal averaging to smooth out variations of the second derivative over different parameters/iterations. Extensive tests on NLP, CV, and recommendation system tasks show that AdaHessian achieves state-of-the-art results, with 10x less sensitivity to hyperparameter tuning compared to Adam.

In particular, we find that AdaHessian:

(i) outperforms AdamW for transformers by 0.13/0.33 BLEU score on IWSLT14/WMT14, 2.7/1.0 PPL on PTB/Wikitext-103;

(ii) outperforms AdamW for SqueezeBert by 0.41 points on GLUE;

(iii) achieves 1.45%/5.55% higher accuracy on ResNet32/ResNet18 on Cifar10/ImageNet as compared to Adam; and

(iv) achieves 0.032% better score than AdaGrad for DLRM on the Criteo Ad Kaggle dataset.

The cost per iteration of AdaHessian is comparable to first-order methods, and AdaHessian exhibits improved robustness towards variations in hyperparameter values.
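To make the Hutchinson-based curvature approximation mentioned in the abstract more concrete, here is a minimal sketch of Hutchinson's estimator for the diagonal of a Hessian. It is not the AdaHessian implementation: the toy matrix `A`, the function name `hutchinson_diag`, and the sample count are assumptions chosen for illustration, and the Hessian-vector product is an explicit matrix product here, whereas a training framework would typically obtain it through automatic differentiation.

```python
import numpy as np


def hutchinson_diag(hvp, dim, num_samples=2000, seed=0):
    """Estimate diag(H) as the average of z * (H z) over random sign vectors z.

    hvp: function returning the Hessian-vector product H @ v for a vector v.
    """
    rng = np.random.default_rng(seed)
    estimate = np.zeros(dim)
    for _ in range(num_samples):
        # Rademacher vector: each entry is +1 or -1 with equal probability.
        z = rng.choice([-1.0, 1.0], size=dim)
        # Entry i of z * (H z) equals H_ii plus zero-mean off-diagonal terms.
        estimate += z * hvp(z)
    return estimate / num_samples


# Toy quadratic loss 0.5 * x^T A x, whose Hessian is the symmetric matrix A.
A = np.array([[4.0, 1.0, 0.0],
              [1.0, 3.0, 0.5],
              [0.0, 0.5, 2.0]])

diag_est = hutchinson_diag(lambda v: A @ v, dim=3)
print("estimated diagonal:", diag_est)
print("exact diagonal:    ", np.diag(A))
```

The estimate only needs Hessian-vector products, never the full matrix, which is why the per-iteration cost can stay close to that of a first-order method. The off-diagonal terms cancel on average because the entries of `z` are independent with zero mean.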

Bio: Amir Gholami is a senior research fellow at ICSI and Berkeley AI Research (BAIR). He received his PhD from UT Austin, working on large scale 3D image segmentation, a research topic which received UT Austin’s best doctoral dissertation award in 2018. He is a Melosh Medal finalist, the recipient of best student paper award in SC'17, Gold Medal in the ACM Student Research Competition, as well as best student paper finalist in SC’14. Amir is a recognized expert in industry with long lasting contributions. He was part of the Nvidia team that for the first time made FP16 training possible, enabling more than 10x increase in compute power through tensor cores. That technology has been widely adopted in GPUs today. Amir's current research focuses on exa-scale neural network training and efficient inference.

Zhewei Yao is a Ph.D. student in the BAIR, RISELab (former AMPLab), BDD and Math Department at University of California at Berkeley. He is advised by Michael Mahoney, and he is also working very closely with Kurt Keutzer. His research interest lies in computing statistics, optimization, and machine learning. Currently, he is interested in leveraging tools from randomized linear algebra to provide efficient and scalable solutions for large-scale optimization and learning problems. He is also working on the theory and application of deep learning. Before joining UC Berkeley, he received his B.S. in Math from Zhiyuan Honor College at Shanghai Jiao Tong University.

Distributed Deep Learning with Second Order Information

Abstract: As the scale of deep neural networks continues to increase exponentially, distributed training is becoming an essential tool in deep learning. Especially in the context of un/semi/self-supervised pretraining, larger models tend to achieve much higher accuracy. This trend is especially clear in natural language processing, where the latest GPT-3 model has 175 billion parameters. The training of such models requires hybrid data+model-parallelism. In this talk, I will describe two of our recent efforts in the context of large-scale distributed deep learning: (1) second-order optimization and (2) reducing the memory footprint.

Bio: Rio Yokota is an Associate Professor at the Tokyo Institute of Technology. His research interests lie at the intersection of HPC and ML. On the HPC side, he has worked on hierarchical low-rank approximation methods such as FMM and H-matrices. He has worked on GPU computing since 2007 and won the Gordon Bell prize using the first GPU supercomputer in 2009. On the ML side, he works on distributed deep learning and second-order optimization. His work on training ImageNet in 2 minutes with second-order methods has been extended to various applications using second-order information.

High Performance Tensor Computations

Abstract: Tensor decompositions, contractions, and tensor networks are prevalent in applications ranging from data modeling to simulation of quantum systems. Numerical kernels within these methods present challenges associated with sparsity, symmetry, and other types of tensor structure. We describe recent innovations in algorithms for tensor contractions and tensor decompositions, which minimize costs and improve scalability. Further, we highlight new libraries for (1) automatic differentiation in the context of high-order tensor optimization, (2) efficient tensor decomposition, and (3) tensor network state simulation. These libraries all build on distributed tensor contraction kernels for sparse and dense tensors provided by the Cyclops library, enabling a shared ecosystem for applications of tensor computations.

Bio: Edgar Solomonik is an assistant professor in the Department of Computer Science at the University of Illinois at Urbana-Champaign. He was previously an ETH Zurich Postdoctoral Fellow and did his PhD at the University of California, Berkeley. He has received the DOE Computational Science Graduate Fellowship, ACM/IEEE-CS George Michael Memorial HPC Fellowship, the David J. Sakrison Memorial Prize, the Alston S. Householder Prize, the IEEE-CS TCHPC Award for Excellence for Early Career Researchers in High Performance Computing, the SIAM Activity Group on Supercomputing Early Career Prize, and an NSF CAREER award. His research focuses on high performance numerical linear algebra, tensor computations, and parallel algorithms.

Light-Weight Performance Analysis for Next-Generation HPC Systems

Abstract: Building efficient and scalable performance analysis and optimization tools for large-scale systems is increasingly important both for the developers of parallel applications and the designers of next-generation HPC systems. However, conventional performance tools suffer from significant time/space overhead due to the ever-increasing problem size and system scale. On the other hand, the cost of source code analysis is independent of the problem size and system scale, making it very appealing for large-scale performance analysis. Inspired by this observation, we have designed a series of light-weight performance tools for HPC systems, such as memory access monitoring, performance variance detection, and communication compression. In this talk, I will share our experience in building these tools by combining static analysis and runtime analysis, and also point out the main challenges in this direction.

Bio: Jidong Zhai is a Tenured Associate Professor in the Computer Science Department of Tsinghua University. He is a Siebel Scholar and a recipient of the CCF Outstanding Doctoral Dissertation Award, the IEEE TPDS Award for Editorial Excellence, and the NSFC Young Career Award. He was a Visiting Professor at Stanford University (2015-2016) and a Visiting Scholar at MSRA (Microsoft Research Asia) in 2013. His research interests include parallel computing, performance evaluation, compiler optimization, and heterogeneous computing. He has published more than 50 papers in prestigious refereed conferences and top journals including SC, PPOPP, ASPLOS, ICS, ATC, MICRO, NSDI, IEEE TPDS, and IEEE TC. His research was a Best Paper Finalist at SC14. He is the advisor of the Tsinghua Student Cluster Team, which under his leadership has won 9 international championships in student supercomputing challenges at SC, ISC, and ASC. In 2015 and 2018, the team won all three championships at SC, ISC, and ASC. He was a program co-chair of NPC 2018 and a program co-chair of the ICPP PASA 2015 workshop. He has served or is serving as a TPC member of SC, ICS, PPOPP, IPDPS, ICPP, NAS, LCPC, and Euro-Par. He is the general secretary of ACM SIGHPC China. He is currently on the editorial boards of IEEE Transactions on Parallel and Distributed Systems (TPDS), IEEE Transactions on Cloud Computing (TCC), and Journal of Parallel and Distributed Computing.

Decomposing MPI Collectives for Exploiting Multi-lane Communication

Abstract: Many modern, high-performance systems increase the cumulated node-bandwidth by offering more than a single communication network and/or by having multiple connections to the network, such that a single processor-core cannot by itself saturate the off-node bandwidth. Efficient algorithms and implementations for collective operations as found in, e.g., MPI, must be explicitly designed for exploiting such multi-lane capabilities. We are interested in gauging to which extent this might be the case.

In the talk, I will illustrate how we systematically decompose the MPI collectives into similar operations that can execute concurrently on and exploit multiple network lanes. Our decomposition is applicable to all standard, regular MPI collectives, and our implementations' performance can be readily compared to the native collectives of any given MPI library. Contrary to expectation, our full-lane, performance guideline implementations in many cases show surprising performance improvements with different MPI libraries on different systems, indicating severe problems with native MPI library implementations. In many cases, our full-lane implementations are large factors faster than the corresponding library MPI collectives. The results indicate considerable room for improvement of the MPI collectives in current MPI libraries including a more efficient use of multi-lane capabilities.
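As a rough illustration of what decomposing a collective across lanes can look like, the sketch below simulates a broadcast in three steps: a node-local scatter on the root node, one broadcast per lane across nodes, and a node-local allgather. This is an assumption about the general shape of such a decomposition, not the speaker's implementation; it runs in plain Python without MPI, and the names `split_blocks` and `multi_lane_bcast`, as well as the layout (one lane per local rank, root on node 0), are hypothetical.

```python
def split_blocks(data, parts):
    """Split a list into `parts` contiguous, nearly equal blocks."""
    base, extra = divmod(len(data), parts)
    blocks, start = [], 0
    for r in range(parts):
        size = base + (1 if r < extra else 0)
        blocks.append(data[start:start + size])
        start += size
    return blocks


def multi_lane_bcast(data, num_nodes, procs_per_node):
    """Simulate broadcasting `data` from local rank 0 on node 0.

    Returns buffers[node][local_rank]: the data each process ends up with.
    """
    # Step 1: node-local scatter on the root node, one block per local rank.
    blocks = split_blocks(data, procs_per_node)

    # Step 2: one broadcast per lane. Lane r connects local rank r on every
    # node; on real hardware these broadcasts could proceed concurrently,
    # each moving only 1/procs_per_node of the message off-node.
    held = [[None] * procs_per_node for _ in range(num_nodes)]
    for lane in range(procs_per_node):
        for node in range(num_nodes):
            held[node][lane] = list(blocks[lane])

    # Step 3: node-local allgather reassembles the full message everywhere.
    buffers = []
    for node in range(num_nodes):
        full = [x for block in held[node] for x in block]
        buffers.append([list(full) for _ in range(procs_per_node)])
    return buffers


message = list(range(10))
result = multi_lane_bcast(message, num_nodes=3, procs_per_node=4)
print(all(buf == message for node in result for buf in node))
```

The point of the sketch is the data movement pattern: every process on a node takes part in the off-node step, so no single core has to push the whole message through the network.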

Bio: Jesper Larsson Träff is professor for Parallel Computing at TU Wien (Vienna University of Technology) since 2011. From 2010 to 2011 he was guest professor for Scientific Computing at the University of Vienna. From 1998 until 2010 he was working at the NEC Laboratories Europe in Sankt Augustin, Germany on efficient implementations of MPI for NEC vector supercomputers; this work led to a doctorate (Dr. Scient.) from the University of Copenhagen in 2009. From 1995 to 1998 he spent four years as PostDoc/Research Associate in the Algorithms Group of the Max-Planck Institute for Computer Science in Saarbrücken, and the Efficient Algorithms Group at the Technical University of Munich. He received an M.Sc. in computer science in 1989, and, after two interim years at the industrial research center ECRC in Munich, a Ph.D. in 1995, both from the University of Copenhagen.

Large Graph Processing on Heterogeneous Architectures: Systems, Applications and Beyond

Abstract: Graphs are the de facto data structures for many data processing applications, and their volume is ever growing. Many graph processing tasks are computation intensive and/or memory intensive. Therefore, we have witnessed a significant amount of effort in accelerating graph processing tasks with heterogeneous architectures like GPUs, FPGAs and even ASICs. In this talk, we will first review the literature on large graph processing systems on heterogeneous architectures. Next, we present our research efforts, and demonstrate the significant performance impact of hardware-software co-design on designing high performance graph computation systems and applications. Finally, we outline the research agenda on challenges and opportunities in the system and application development of future graph processing.

Bio: Dr. Bingsheng He is currently an Associate Professor and Vice-Dean (Research) at the School of Computing, National University of Singapore. Before that, he was a faculty member at Nanyang Technological University, Singapore (2010-2016), and held a research position in the System Research group of Microsoft Research Asia (2008-2010), where his major research was building high performance cloud computing systems for Microsoft. He received his Bachelor's degree from Shanghai Jiao Tong University (1999-2003) and his Ph.D. degree from Hong Kong University of Science & Technology (2003-2008). His current research interests include cloud computing, database systems and high performance computing. His papers are published in prestigious international journals (such as ACM TODS and IEEE TKDE/TPDS/TC) and proceedings (such as ACM SIGMOD, VLDB/PVLDB, ACM/IEEE SuperComputing, ACM HPDC, and ACM SoCC). He was awarded the IBM Ph.D. fellowship (2007-2008) and the NVIDIA Academic Partnership (2010-2011). Since 2010, he has (co-)chaired a number of international conferences and workshops, including IEEE CloudCom 2014/2015, BigData Congress 2018 and ICDCS 2020. He has served on the editorial boards of international journals, including IEEE Transactions on Cloud Computing (IEEE TCC), IEEE Transactions on Parallel and Distributed Systems (IEEE TPDS), IEEE Transactions on Knowledge and Data Engineering (TKDE), Springer Journal of Distributed and Parallel Databases (DAPD) and ACM Computing Surveys (CSUR). He received editorial excellence awards for his service to IEEE TCC and IEEE TPDS in 2019.

Enabling Rapid COVID-19 Small Molecule Drug Design Through Scalable Deep Learning of Generative Models

Abstract: We improved the quality and reduced the time to produce machine-learned models for use in small molecule antiviral design. Our globally asynchronous multi-level parallel training approach strong scales to all of Sierra with up to 97.7% efficiency. We trained a novel, character-based Wasserstein autoencoder that produces a higher quality model trained on 1.613 billion compounds in 23 minutes while the previous state-of-the-art takes a day on 1 million compounds. Reducing training time from a day to minutes shifts the model creation bottleneck from computer job turnaround time to human innovation time. Our implementation achieves 318 PFLOPS for 17.1% of half-precision peak. We will incorporate this model into our molecular design loop, enabling the generation of more diverse compounds: searching for novel, candidate antiviral drugs improves and reduces the time to synthesize compounds to be tested in the lab.
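The figures quoted in the abstract can be turned into two derived quantities with a few lines of arithmetic. The sketch below is only a back-of-the-envelope reading of those numbers: it assumes "a day" means 24 hours, and the variable names are made up for illustration.

```python
# Figures quoted in the abstract.
compounds_new = 1.613e9      # compounds used to train the new model
minutes_new = 23             # training time of the new approach, in minutes
compounds_old = 1e6          # compounds used by the previous state of the art
minutes_old = 24 * 60        # assumption: "a day" is taken as 24 hours

# Training throughput in compounds per minute, and the resulting ratio.
throughput_new = compounds_new / minutes_new
throughput_old = compounds_old / minutes_old
throughput_ratio = throughput_new / throughput_old

# 318 PFLOPS is stated to be 17.1% of half-precision peak, which implies
# a half-precision peak for the machine.
achieved_pflops = 318.0
fraction_of_peak = 0.171
implied_peak_pflops = achieved_pflops / fraction_of_peak

print(f"throughput ratio: about {throughput_ratio:,.0f}x")
print(f"implied half-precision peak: about {implied_peak_pflops:,.0f} PFLOPS")
```

Under these assumptions the per-compound training throughput improves by roughly five orders of magnitude, which is the sense in which the bottleneck moves from job turnaround time to human iteration time.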

Bio: Brian Van Essen is the informatics group leader and a computer scientist at the Center for Applied Scientific Computing at Lawrence Livermore National Laboratory (LLNL). He is pursuing research in large-scale deep learning for scientific domains and training deep neural networks using high-performance computing systems. He is the project leader for the Livermore Big Artificial Neural Network open-source deep learning toolkit, and the LLNL lead for the ECP ExaLearn and CANDLE projects. Additionally, he co-leads an effort to map scientific machine learning applications to neural network accelerator co-processors as well as neuromorphic architectures. He joined LLNL in 2010 after earning his Ph.D. and M.S. in computer science and engineering at the University of Washington. He also has an M.S. and B.S. in electrical and computer engineering from Carnegie Mellon University.

Optimizing CESM-HR on Sunway TaihuLight and An Unprecedented Set of Multi-Century Simulations

Abstract: CESM is one of the very first and most complex scientific codes to be migrated onto Sunway TaihuLight. Being a community code involving hundreds of different dynamic, physics, and chemistry processes, CESM brings severe challenges for the many-core architecture and the parallel scale of Sunway TaihuLight. This talk summarizes our continuous effort on enabling efficient runs of CESM on Sunway, starting from the refactoring of CAM in 2015, the redesign of CAM in 2016 and 2017, and a collaborative effort starting in 2018 to enable highly efficient simulations of the high-resolution (25 km atmosphere and 10 km ocean) Community Earth System Model (CESM-HR) on Sunway TaihuLight. The refactoring and optimization efforts have improved the simulation speed of CESM-HR from 1 SYPD (simulation years per day) to 5 SYPD (with output disabled). Using CESM-HR, we managed to provide an unprecedented set of high-resolution climate simulations, consisting of a 500-year pre-industrial control simulation and a 250-year historical and future climate simulation from 1850 to 2100. Overall, high-resolution simulations show significant improvements in representing global mean temperature changes, the seasonal cycle of sea-surface temperature and mixed layer depth, extreme events, and relationships between extreme events and climate modes.

Bio: Haohuan Fu is a professor in the Ministry of Education Key Laboratory for Earth System Modeling, and Department of Earth System Science at Tsinghua University, where he leads the research group of High Performance Geo-Computing (HPGC). He is also the deputy director of the National Supercomputing Center in Wuxi, leading the research and development division. Fu has a PhD in computing from Imperial College London. His research work focuses on providing both the most efficient simulation platforms and the most intelligent data management and analysis platforms for geoscience applications, leading to two consecutive ACM Gordon Bell Prizes (nonhydrostatic atmospheric dynamic solver in 2016, and nonlinear earthquake simulation in 2017).

Evaluating modern programming models using the Parallel Research Kernels

Abstract: The Parallel Research Kernels were developed to support empirical studies of programming models in a variety of contexts without the porting effort required by proxy or mini-applications. I will describe the project and why it has been a useful tool in a variety of contexts and present some of our findings related to modern C++ parallelism for CPU and GPU architectures.

Bio: Jeff Hammond is a Principal Engineer at Intel where he works on a wide range of high-performance computing topics, including parallel programming models, system architecture and open-source software. Previously, Jeff worked at the Argonne Leadership Computing Facility where he worked on Blue Gene and built things with MPI. Jeff received his PhD in Physical Chemistry from the University of Chicago for research performed in collaboration with the NWChem team at Pacific Northwest National Laboratory.

High-Performance Sparse Tensor Operations in HiParTI Library

Abstract: This talk will present the recent development of HiParTI, a Hierarchical Parallel Tensor Infrastructure. I will focus on element-wise sparse tensor contractions, which commonly appear in quantum chemistry, physics, and other fields. We introduce three optimization techniques, using a multi-dimensional, efficient hashtable representation for the accumulator and for the larger input tensor, together with all-stage parallelization. Evaluated on 15 datasets, we obtain a 28-576x speedup over traditional sparse tensor contraction. With our proposed algorithm- and memory-heterogeneity-aware data management, a further performance improvement over state-of-the-art solutions is achieved on heterogeneous memory with DRAM and Intel Optane DC Persistent Memory Module (PMM).
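The role of hash tables in a sparse tensor contraction can be sketched in a few lines. The example below contracts a third-order sparse tensor with a sparse matrix over one shared index, using one hash table to index an input by the contracted index and another as the output accumulator. It is a sequential toy version under stated assumptions, not HiParTI code: Python dictionaries stand in for the hash tables, the coordinate-to-value input format and the function name `sparse_contract` are hypothetical, and no parallelization is shown.

```python
from collections import defaultdict


def sparse_contract(x, y):
    """Compute z[i, j, l] = sum over k of x[i, j, k] * y[k, l].

    x: dict mapping (i, j, k) -> value, storing nonzeros only.
    y: dict mapping (k, l) -> value, storing nonzeros only.
    Returns a dict mapping (i, j, l) -> value.
    """
    # Hash table over y, keyed by the contracted index k, so that matching
    # nonzeros are found by lookup rather than by scanning all of y.
    y_by_k = defaultdict(list)
    for (k, l), y_val in y.items():
        y_by_k[k].append((l, y_val))

    # Hash-table accumulator keyed by the output coordinates.
    acc = defaultdict(float)
    for (i, j, k), x_val in x.items():
        for l, y_val in y_by_k.get(k, ()):
            acc[(i, j, l)] += x_val * y_val
    return dict(acc)


x = {(0, 0, 1): 2.0, (0, 1, 0): 3.0, (1, 0, 1): 4.0}
y = {(1, 0): 5.0, (0, 2): 1.0, (1, 2): -1.0}
z = sparse_contract(x, y)
print(z)
```

Both tables avoid materializing the dense output or searching the inputs linearly, so the work is proportional to the number of matching nonzero pairs.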

Bio: Jiajia Li is a research scientist in the High Performance Computing group at Pacific Northwest National Laboratory (PNNL). She received her Ph.D. degree from the Georgia Institute of Technology in 2018. Her current research emphasizes optimizing tensor methods, especially for sparse data from diverse applications, by utilizing various parallel architectures. She received the Best Student Paper Award at SC'18, was a Best Paper Finalist at PPoPP'19, and was named "A Rising Star in Computational and Data Sciences". She has served on the technical program committees of conferences and journals such as PPoPP, SC, ICS, IPDPS, ICPP, LCTES, Cluster, ICDCS, and TPDS. She previously received a Ph.D. degree from the Institute of Computing Technology at the Chinese Academy of Sciences, China, and a B.S. degree in Computational Mathematics from Dalian University of Technology, China.

Homepage

HPHPC: High Productivity High Performance Computing with Legion and Legate

Abstract: This talk will describe the co-design and implementation of Legion and Legate, two programming systems that synergistically combine to provide a high productivity high performance computing ecosystem. In the first part of the talk, we'll introduce Legion, a task-based runtime system for supercomputers with a strong data model that enables a sophisticated dependence analysis. The second part of the talk will cover Legate, a framework for constructing drop-in replacements for popular Python libraries such as NumPy and Pandas on top of Legion. We'll show how using Legate and Legion together allows users to run unmodified Python programs at scale on hundreds of GPUs simply by changing a few import statements. We'll also discuss how the Legate framework makes it possible to compose such libraries even in distributed settings.

Homepage

Performance Engineering for Sparse Matrix-Vector Multiplication: Some new ideas for old problems

Abstract: The sparse matrix-vector multiplication (SpMV) kernel is a key performance component of numerous algorithms in computational science. Despite the kernel's apparent simplicity, the sparse and potentially irregular data access patterns of SpMV and its intrinsically low computational intensity have been challenging the development of high-performance implementations for decades. Still, these developments are rarely guided by appropriate performance models.

This talk will address the basic problem of understanding (i.e., modelling) and improving the computational intensity of SpMV kernels with a focus on symmetric matrices. Using a recursive algebraic coloring (RACE) of the underlying undirected graph, a node-level parallel symmetric SpMV implementation is developed which increases the computational intensity and the performance for a large general set of matrices by a factor of up to 2x. The same idea is then applied to accelerate the computation of sparse matrix powers via cache blocking.
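
To make the computational-intensity argument concrete, here is a minimal serial sketch in Python of a symmetric SpMV that stores only the upper triangle in CSR form. It is not the RACE implementation; the function name and array layout are assumptions chosen for illustration. Each stored off-diagonal entry is used twice, so roughly half of the matrix data is loaded per multiplication. The mirrored update is a scattered write to `y[j]`, which is the source of the write conflicts that a coloring has to resolve once rows are processed in parallel.

```python
def symmetric_spmv_upper(n, row_ptr, col_idx, values, x):
    """Compute y = A @ x for a symmetric A given only its upper triangle in CSR.

    Hypothetical helper for illustration; serial, so no coloring is needed.
    """
    y = [0.0] * n
    for i in range(n):
        for k in range(row_ptr[i], row_ptr[i + 1]):
            j = col_idx[k]
            a = values[k]
            y[i] += a * x[j]      # contribution of the stored entry A[i, j]
            if j != i:
                y[j] += a * x[i]  # mirrored contribution of A[j, i]
    return y


# Upper triangle of A = [[4, 1, 0], [1, 3, 2], [0, 2, 5]]
row_ptr = [0, 2, 4, 5]
col_idx = [0, 1, 1, 2, 2]
values = [4.0, 1.0, 3.0, 2.0, 5.0]
y = symmetric_spmv_upper(3, row_ptr, col_idx, values, [1.0, 2.0, 3.0])
```

In this example only five values are stored instead of the seven nonzeros of the full matrix, and the result equals the full matrix-vector product.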

Bio: Gerhard Wellein is a Professor for High Performance Computing at the Department for Computer Science at the University of Erlangen-Nuremberg and holds a PhD in theoretical physics from the University of Bayreuth. Since 2001 he has headed the Erlangen National Center for High Performance Computing. He is the deputy speaker of the Bavarian HPC network KONWIHR and a member of the scientific steering committee of the Gauss-Centre for Supercomputing (GCS).

Gerhard Wellein has more than twenty years of experience in teaching HPC techniques to students and scientists from computational science and engineering, is an external trainer in the Partnership for Advanced Computing in Europe (PRACE) and received the "2011 Informatics Europe Curriculum Best Practices Award" (together with Jan Treibig and Georg Hager) for outstanding teaching contributions. His research interests focus on performance modelling and performance engineering, architecture-specific code optimization, novel parallelization approaches and hardware-efficient building blocks for sparse linear algebra and stencil solvers. He has been conducting and leading numerous HPC projects including the German Japanese project "Equipping Sparse Solvers for Exascale" (ESSEX) within the DFG priority program SPPEXA ("Software for Exascale Computing").

Homepage

Cloud-Scale Inference on FPGAs at Microsoft Bing

Abstract: Microsoft's Project Catapult began nearly a decade ago, leading to the widespread deployment of FPGAs in Microsoft's data centers for application and network acceleration. Project Brainwave began five years later, applying those FPGAs to accelerate DNN inference for Bing and later other Microsoft cloud services. FPGA flexibility has enabled the Brainwave architecture to evolve rapidly, keeping pace with rapid developments in the DNN model space. The low cost of updating FPGA-based designs also enables greater risk taking, facilitating innovations such as our Microsoft Floating Point (MSFP) data format. FPGAs with hardened support for MSFP will provide a new level of performance for Brainwave. These AI-optimized FPGAs also introduce a new point in the hardware spectrum between general-purpose devices and domain-specific accelerators. Going forward, a key challenge for accelerator architects will be finding the right balance between hardware specialization, hardware configurability, and software programmability.

Bio: Steven K. Reinhardt is a Partner Hardware Engineering Manager in the Bing Platform Engineering group. His team leads the development and production deployment of the Brainwave FPGA-based DNN inference accelerator in support of Bing and Office 365. Prior to joining Microsoft, Steve was a Senior Fellow at AMD Research, where he led research on heterogeneous systems and high-performance networking. Before that, he was an Associate Professor in the EECS department at the University of Michigan. Steve has published over 50 refereed conference and journal articles. He was also a primary architect and developer of M5 (now gem5), a widely used open-source full-system architecture simulator. Steve received a Ph.D. in Computer Sciences from the University of Wisconsin-Madison, and is an IEEE Fellow and an ACM Distinguished Scientist.

Homepage

Inspecting Irregular Computation Patterns to Generate Fast Code

Abstract: Sparse matrix methods are at the heart of many scientific computations and data analytics codes. Sparse matrix kernels often dominate the overall execution time of many simulations. Further, the indirection from indexing and looping over the nonzero elements of a sparse data structure often limits the optimization of such codes. In this talk, I will introduce Sympiler, a domain-specific code generator that transforms computation patterns in sparse matrix methods for high performance. Specifically, I will show how decoupling symbolic analysis from numerical manipulation enables the automatic optimization of sparse codes. I will also demonstrate the application of symbolic analysis in accelerating quadratic program solvers.

Bio: Maryam Mehri Dehnavi is an Assistant Professor in the Computer Science department at the University of Toronto and is the Canada Research Chair in parallel and distributed computing. Her research focuses on high-performance computing and domain-specific compiler design. Previously, she was an Assistant Professor at Rutgers University and a postdoctoral researcher at MIT. She received her Ph.D. from McGill University in 2013. Some of her recognitions include the Canada Research Chair award, the Ontario Early Researcher award, and the ACM SRC grand finale prize.

Homepage

Transferable Deep Learning Surrogates for Solving PDEs

Abstract: Partial differential equations (PDEs) are ubiquitous in science and engineering to model physical phenomena. Notable PDEs are the Laplace and Navier-Stokes equations with numerous applications in fluid dynamics, electrostatics, and steady-state heat transfer. Solving such PDEs relies on numerical methods such as finite element, finite difference, and finite volume. While these methods are extremely powerful, they are also computationally expensive. Despite widespread efforts to improve the performance and scalability of solving these systems of PDEs, several problems remain intractable.

In this talk, we'll explore the potential of deep learning (DL)-based surrogates to both augment and replace numerical simulations. In the first part of the talk, we'll present two frameworks, CFDNet and SURFNet, that couple simulations with a convolutional neural network to accelerate the convergence of the overall scheme without relaxing the convergence constraints of the physics solver. The second part of the talk will introduce another novel framework that leverages DL to build a transferable deep neural network surrogate that solves PDEs in unseen domains with arbitrary boundary conditions. We'll show that a DL model trained only once can be used without re-training to solve PDEs in large and complex domains with unseen sizes, shapes, and boundary conditions. Compared with the state-of-the-art physics-informed neural networks for solving PDEs, we demonstrate 1-3 orders of magnitude speedups while achieving comparable or better accuracy.

Bio: Aparna Chandramowlishwaran is an Associate Professor at the University of California, Irvine, in the Department of Electrical Engineering and Computer Science. She received her Ph.D. in Computational Science and Engineering from Georgia Tech in 2013 and was a research scientist at MIT prior to joining UCI as an Assistant Professor in 2015. Her research lab, HPC Forge, aims at advancing computational science using high-performance computing and machine learning. She currently serves as the associate editor of the ACM Transactions on Parallel Computing.

Homepage

Exploring Tools & Techniques for the Frontier Exascale System: Challenges vs Opportunities

Abstract: PIConGPU, an extremely scalable, heterogeneous, fully relativistic particle-in-cell (PIC) C++ code, provides a modern simulation framework for laser-plasma physics and laser-matter interactions suitable for production-quality runs on large-scale systems. This plasma physics application is fueled by the alpaka abstraction library and incorporates the openPMD-API, enabling I/O libraries such as ADIOS2. Among many supercomputing systems, PIConGPU has been running on ORNL's Titan and Summit and is expected to run on the exascale system Frontier, which is being built as we speak. This talk will discuss some of the challenges, opportunities and potential solutions with respect to maintaining a performant, portable code while migrating it to Frontier.

Bio: Sunita Chandrasekaran is an Assistant Professor with the Dept. of Computer and Information Sciences at the University of Delaware, USA. Her research interests span High Performance Computing, interdisciplinary science, machine learning and data science. Chandrasekaran has organized and served on the TPC of several conferences and workshops including SC, ISC, IPDPS, IEEE Cluster, CCGrid and WACCPD. She is currently an associate and subject area editor for IEEE TPDS, Elsevier's PARCO, FGCS and JPDC. She is a recipient of the 2016 IEEE-CS TCHPC Award for Excellence for Early Career Researchers in HPC. She received her Ph.D. in 2012 on Tools and Algorithms for High-Level Algorithm Mapping to FPGAs from the School of Computer Science and Engineering, Nanyang Technological University, Singapore.

Homepage

High Performance Computing: Beyond Moore's Law

Abstract: Supercomputer performance now exceeds that of the earliest computers by thirteen orders of magnitude, yet science still needs more than they provide. But Dennard scaling and Moore's Law are ending even as AI and HPC demand continued growth. Demand engenders supply, and ways to prolong the growth in supercomputing performance are at hand or on the horizon. Architectural specialization has returned, after a loss of system diversity in the Moore's Law era; it provides a significant boost for computational science. And at the hardware level, the development by Cerebras of a viable wafer-scale compute platform has important ramifications. Other long-term possibilities, notably quantum computing, may eventually play a role.

Why wafer-scale? Real achieved performance in supercomputers (as opposed to the peak speed) is limited by the bandwidth and latency barriers --- memory and communication walls --- that impose delay when off-processor-chip data is needed, and it is needed all the time. By changing the scale of the chip by two orders of magnitude, we can pack a small, powerful, mini-supercomputer on one piece of silicon, and eliminate much of the off-chip traffic for applications that can fit in the available memory. The elimination of most off-chip communication also cuts the power per unit performance, a key parameter when total system power is capped, as it usually is.

Cerebras overcame technical problems concerning yield, packaging, cooling, and delivery of electrical power in order to make wafer-scale computing viable. The Cerebras second generation wafer has over 800,000 identical processing elements architected with features that support sparsity and power-efficient performance. For ML, algorithmic innovations such as conditional computations and model and data sparsity promise significant savings in memory and computation while preserving model capacity. Flexible hardware rather than dense matrix multiply is required to best exploit these algorithmic innovations. We will discuss the aspects of the architecture that meet that requirement.

Bio: Rob Schreiber is a Distinguished Engineer at Cerebras Systems, Inc., where he works on architecture and programming of highly parallel systems for AI and science. Before Cerebras he taught at Stanford and RPI and worked at NASA, at startups, and at HP. Schreiber's research spans sequential and parallel algorithms for matrix computation, compiler optimization for parallel languages, and high performance computer design. With Moler and Gilbert, he developed the sparse matrix extension of Matlab. He created the NAS CG parallel benchmark. He was a designer of the High Performance Fortran language. Rob led the development at HP of a system for synthesis of custom hardware accelerators. He has helped pioneer the exploitation of photonic signaling in processors and networks. He is an ACM Fellow, a SIAM Fellow, and was awarded, in 2012, the Career Prize from the SIAM Activity Group in Supercomputing.

Bio: Natalia Vasilieva is Director of Product, Machine Learning at Cerebras Systems, where she leads market, application, and algorithm analysis for ML use cases. She was a Senior Research Manager at HP Labs, where she led the Software and AI group, worked on performance characterization and modelling of deep learning workloads, fast Monte Carlo simulations, and systems software, programming paradigms, algorithms and applications for The HP memory-driven computing project. She was an associate professor at Saint Petersburg State University and a lecturer at the Saint Petersburg Computer Science Center, and holds a PhD in mathematics, computer science, and information technology from Saint Petersburg State University.

Homepage

Heterogeneous System Architectures: A Strategy to Use Diverse Components

Abstract: Current system architectures rely on a simple approach: one compute node design that is used across the entire system. This approach only supports heterogeneity at the node level. Compute nodes may involve a variety of devices but the system is otherwise homogeneous. This design simplifies scheduling applications and provides consistent expectations for the hardware that a job can exploit but often results in poor utilization of components. The wide range of emerging devices for AI and other domains necessitates a more heterogeneous system architecture that varies the compute node (or volume) types within a single job.

Lawrence Livermore National Laboratory (LLNL) is currently exploring such heterogeneous system architectures. These explorations include the use of novel hardware to accelerate AI models within larger applications and initial software solutions to overcome the challenges posed by heterogeneous system architectures. This talk will present a sampling of the novel software solutions that enable the heterogeneous system architecture as well as the systems that LLNL has currently deployed.

Bio: As Chief Technology Officer (CTO) for Livermore Computing (LC) at Lawrence Livermore National Laboratory (LLNL), Bronis R. de Supinski formulates LLNL's large-scale computing strategy and oversees its implementation. He frequently interacts with supercomputing leaders and oversees many collaborations with industry and academia. Previously, Bronis led several research projects in LLNL's Center for Applied Scientific Computing. He earned his Ph.D. in Computer Science from the University of Virginia in 1998 and he joined LLNL in July 1998. In addition to his work with LLNL, Bronis is also a Professor of Exascale Computing at Queen's University of Belfast and an Adjunct Associate Professor in the Department of Computer Science and Engineering at Texas A&M University. Throughout his career, Bronis has won several awards, including the prestigious Gordon Bell Prize in 2005 and 2006, as well as two R&D 100s, including one for his leadership in the development of a novel scalable debugging tool.

Homepage

TinyML and Efficient Deep Learning

Abstract: Today's AI is too big. Deep neural networks demand extraordinary levels of data and computation, and therefore power, for training and inference. This severely limits the practical deployment of AI in edge devices. We aim to improve the efficiency of neural network design. First, I'll present MCUNet that brings deep learning to IoT devices. MCUNet is a framework that jointly designs the efficient neural architecture (TinyNAS) and the light-weight inference engine (TinyEngine), enabling ImageNet-scale inference on micro-controllers that have only 1MB of Flash. Next I will introduce Once-for-All Network, an efficient neural architecture search approach, that can elastically grow and shrink the model capacity according to the target hardware resource and latency constraints. From inference to training, I'll present TinyTL that enables tiny transfer learning on-device, reducing the memory footprint by 7-13x. Finally, I will describe data-efficient GAN training techniques that can generate photo-realistic images using only 100 images, which used to require tens of thousands of images. We hope such TinyML techniques can make AI greener, faster, more efficient and more sustainable.

Bio: Song Han is an assistant professor at MIT's EECS. He received his PhD degree from Stanford University. His research focuses on efficient deep learning computing. He proposed the "deep compression" technique that can reduce neural network size by an order of magnitude without losing accuracy, and the hardware implementation "efficient inference engine" that first exploited pruning and weight sparsity in deep learning accelerators. His team's work on hardware-aware neural architecture search that brings deep learning to IoT devices was highlighted by MIT News, Wired, Qualcomm News, VentureBeat, and IEEE Spectrum, integrated in PyTorch and AutoGluon, and received many low-power computer vision contest awards in flagship AI conferences (CVPR'19, ICCV'19 and NeurIPS'19). Song received Best Paper awards at ICLR'16 and FPGA'17, the Amazon Machine Learning Research Award, the SONY Faculty Award, the Facebook Faculty Award, and the NVIDIA Academic Partnership Award. Song was named to the "35 Innovators Under 35" list by MIT Technology Review for his contribution to the "deep compression" technique that "lets powerful artificial intelligence (AI) programs run more efficiently on low-power mobile devices." Song received the NSF CAREER Award for "efficient algorithms and hardware for accelerated machine learning" and the IEEE "AIs 10 to Watch: The Future of AI" award.

Homepage

Optimization of Data Movement for Convolutional Neural Networks

Abstract: Convolutional Neural Networks (CNNs) are central to Deep Learning. The optimization of CNNs has therefore received significant attention. Minimizing data movement is critical to performance optimization. This talk will address the minimization of data movement for CNNs in two scenarios. In the first part of the talk, the optimization of tile loop permutations and tile size selection will be discussed for executing CNNs on multicore CPUs. Most efforts on optimization of tiling for CNNs have either used heuristics or limited search over the huge design space. We show that a comprehensive design space exploration is feasible via analytical modeling. In the second part of the talk, communication minimization for executing CNNs on distributed systems will be discussed.

Bio: Sadayappan is a Professor in the School of Computing at the University of Utah, with a joint appointment at Pacific Northwest National Laboratory. His primary research interests center around performance optimization and compiler/runtime systems for high-performance computing, with a special emphasis on optimization of tensor computations. Sadayappan is an IEEE Fellow.

Homepage

Research with AIEngine and MLIR

Abstract: The Xilinx Versal devices include an array of AIEngine Vector-VLIW processor cores suitable for Machine Learning and DSP processing tasks. This talk will provide an overview of AIEngine-based devices and discuss how they are programmed. The talk will also present recent work to build open source tools for these devices based on MLIR to support a wide variety of high-level programming models.

Bio: Stephen Neuendorffer is a Distinguished Engineer in the Xilinx Research Labs working on various aspects of system design for FPGAs. Previously, he was product architect of Xilinx Vivado HLS and co-authored a widely used textbook on HLS design for FPGAs. He received B.S. degrees in Electrical Engineering and Computer Science from the University of Maryland, College Park in 1998. He graduated with University Honors, Departmental Honors in Electrical Engineering, and was named the Outstanding Graduate in the Department of Computer Science. He received the Ph.D. degree from the University of California, Berkeley, in 2003, after being one of the key architects of Ptolemy II.

Homepage

Towards Next-Generation Numerical Methods with Physics-Informed Neural Networks

Abstract: Physics-Informed Neural Networks (PINNs) have recently emerged as a powerful tool for solving scientific computing problems. PINNs can be effectively used for developing surrogate models, completing data assimilation and uncertainty quantification tasks, and solving ill-defined problems, e.g., problems without boundary conditions or a closure equation. An additional application of PINNs is a central topic for scientific computing: the development of numerical solvers of Partial Differential Equations (PDEs). While the accuracy and performance of PINNs for solving PDEs directly are still relatively low compared to traditional numerical solvers, combining traditional methods and PINNs opens up the possibility of designing new hybrid numerical methods. This talk introduces how PINNs work, emphasizing the relation between PINN components and main ideas and classical numerical methods, such as Finite Element Methods, Krylov solvers, and quasi-Monte Carlo techniques. I present PINNs' features that make them amenable to use in combination with traditional solvers. I then outline opportunities for developing a new class of numerical methods combining classical and neural network solvers, providing results from initial experiments.

Bio: I was born in Parma, Italy, in 1976. I studied in Torino, Italy, and Urbana-Champaign, Illinois, obtaining an MS and a Ph.D. degree in Nuclear Engineering. Since 2012, I have worked at KTH Royal Institute of Technology, Sweden, where I am now an associate professor. My research interests focus on programming models and emerging computing paradigms.

Homepage

Parallel Sparse Matrix Algorithms for Data Analysis and Machine Learning

Abstract: In addition to the traditional theory and experimental pillars of science, we are witnessing the emergence of three additional pillars: simulation, data analysis, and machine learning. All three recent pillars of science rely on computing, but in different ways. Matrices, and sparse matrices in particular, play an outsized role in all three computing-related pillars of science, which will be the topic of my talk.

I will first highlight some of the emerging use cases of sparse matrices in data analysis and machine learning. These include graph computations, graph representation learning, and computational biology. The rest of my talk will focus on new parallel algorithms for such modern computations on sparse matrices. These include the use of "masking" for filtering out undesired output entries in sparse-times-sparse and dense-times-dense matrix multiplication, new distributed-memory algorithms for sparse matrix times tall-skinny dense matrix multiplication, combinations of these algorithms, and subroutines of them.
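As a rough illustration of the "masking" idea mentioned above, the sketch below multiplies two sparse matrices while computing only the output entries that a mask allows. It is a minimal serial example, not the speaker's algorithm: the dict-of-dicts storage format and the function name `masked_spgemm` are assumptions made for illustration.

```python
def masked_spgemm(a, b, mask):
    """Compute C = A @ B, keeping only output positions listed in mask.

    a, b: sparse matrices stored as {row: {col: value}} (hypothetical format).
    mask: set of (row, col) output positions that should be kept.
    """
    # Group the allowed output columns by row so each row is visited once.
    allowed = {}
    for i, j in mask:
        allowed.setdefault(i, set()).add(j)

    c = {}
    for i, cols in allowed.items():
        row_acc = {}
        for k, a_ik in a.get(i, {}).items():
            for j, b_kj in b.get(k, {}).items():
                if j in cols:  # skip products the mask would discard anyway
                    row_acc[j] = row_acc.get(j, 0) + a_ik * b_kj
        if row_acc:
            c[i] = row_acc
    return c


# A = [[1, 2], [0, 3]] and B = [[4, 5], [6, 7]], stored sparsely.
A = {0: {0: 1, 1: 2}, 1: {1: 3}}
B = {0: {0: 4, 1: 5}, 1: {0: 6, 1: 7}}
# Keep only the two off-diagonal entries of the product.
C = masked_spgemm(A, B, mask={(0, 1), (1, 0)})
```

Applying the mask inside the multiplication, rather than filtering the full product afterwards, means rows with no allowed outputs are never touched and discarded entries are never accumulated.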

Bio: Aydın Buluç is a Staff Scientist and Principal Investigator at the Lawrence Berkeley National Laboratory (LBNL) and an Adjunct Assistant Professor of EECS at UC Berkeley. His research interests include parallel computing, combinatorial scientific computing, high performance graph analysis and machine learning, sparse matrix computations, and computational biology. Previously, he was a Luis W. Alvarez postdoctoral fellow at LBNL and a visiting scientist at the Simons Institute for the Theory of Computing. He received his PhD in Computer Science from the University of California, Santa Barbara in 2010 and his BS in Computer Science and Engineering from Sabanci University, Turkey in 2005. Dr. Buluç is a recipient of the DOE Early Career Award in 2013 and the IEEE TCSC Award for Excellence for Early Career Researchers in 2015. He was a founding associate editor of the ACM Transactions on Parallel Computing.

Homepage

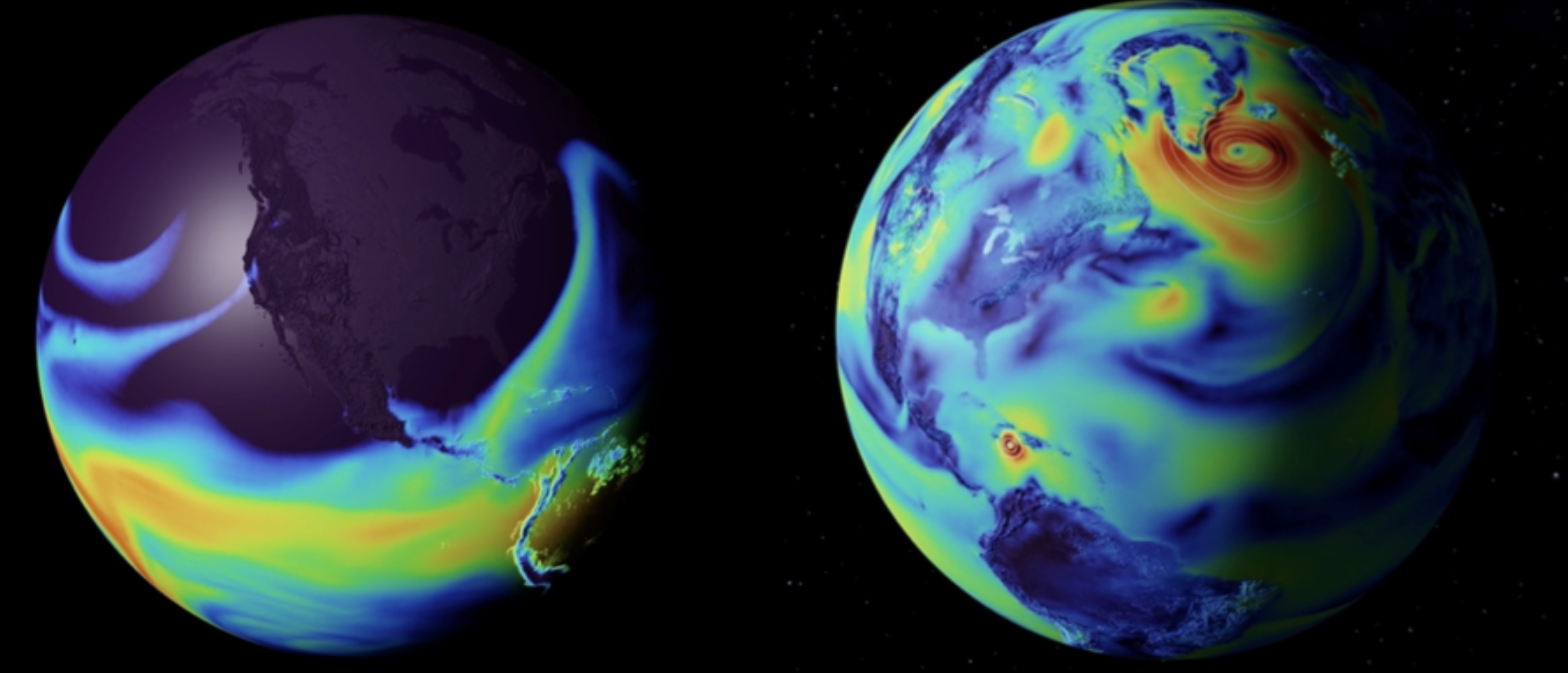

Building Digital Twins of the Earth for NVIDIA's Earth-2 Initiative

Abstract: NVIDIA is committed to helping address climate change. Recently our CEO announced the Earth-2 initiative, which aims to build digital twins of the Earth and a dedicated supercomputer, E-2, to power them. Two central goals of this initiative are to predict the disastrous impacts of climate change well in advance and to help develop strategies to mitigate and adapt to change.

Here we present our work on an AI weather forecast surrogate trained on ECMWF's ERA5 reanalysis dataset. The model, called FourCastNet, employs a patch-based Vision-Transformer with a Fourier Neural Operator mixer. FourCastNet produces short- to medium-range weather predictions of about two dozen physical fields at 25-km resolution that exceed the quality of all related deep learning-based techniques to date. FourCastNet is capable of accurately forecasting fast-timescale variables such as the surface wind speed, precipitation, and atmospheric water vapor, with important implications for wind energy resource planning and for predicting extreme weather events such as tropical cyclones, atmospheric rivers, and extreme precipitation. We compare the forecast skill of FourCastNet with archived operational IFS model forecasts and find that the forecast skill of our purely data-driven model is remarkably close to that of the IFS model for forecast lead times of up to 8 days. Furthermore, it can produce a 10-day forecast in a fraction of a second on a single GPU.

The enormous speed and high accuracy of FourCastNet provide at least three major advantages over traditional forecasts: (i) real-time user interactivity and analysis; (ii) the potential for large forecast ensembles; and (iii) the ability to combine fast surrogates to form new coupled systems. Large ensembles can capture rare but highly impactful extreme weather events and better quantify the uncertainty of such events by providing more accurate statistics. The figure below shows results from FourCastNet in NVIDIA's interactive Omniverse environment. On the left we show atmospheric rivers making landfall in California in February 2017. On the right is a forecast of Hurricane Matthew from September 2016. By plugging AI surrogates into Omniverse, users can generate, visualize, and explore potential weather outcomes interactively.

Bio: Karthik Kashinath is a senior machine learning scientist and technologist at NVIDIA. He leads various ML initiatives for Earth System Science and CFD applications, including NVIDIA's Earth-2 initiative, which aims to build digital twins of the Earth. Before joining NVIDIA in August 2021, he was at NERSC, Lawrence Berkeley Lab, where he led various climate informatics and machine learning projects at the Big Data Center. He received his Bachelor's from the Indian Institute of Technology - Madras, his Master's from Stanford University, and his PhD from the University of Cambridge. His background is in engineering and applied physics. His research uses the power of machine learning to accelerate scientific discovery in the complex chaotic systems of turbulence, weather, and climate science. A particular focus area is physics-informed machine learning to develop physically consistent, trustworthy, and robust machine learning models. When he is not in front of the computer he is hiking up mountains, swimming in lakes, or cooking up a storm.

Language and Compiler Research for Heterogeneous Emerging Computing Systems

Abstract: Programming heterogeneous computing systems is still a daunting task that will become even more challenging with the advent of emerging, non-von Neumann computer architectures. The so-called golden age of computer architecture must thus be accompanied by what will hopefully be a golden age of research in compilers and programming languages. This talk discusses research along two fronts, namely, (1) on domain-specific languages (DSLs) to hide complexity from non-expert programmers while passing richer information to compilers, and (2) on understanding the fundamental changes in emerging computing paradigms and their consequences for compilers. Concretely, we will talk about DSLs for physics simulations, compute-in-memory with emerging technologies, and current efforts in unifying intermediate representations with the MLIR compiler framework.

Bio: Jeronimo Castrillon is a professor in the Department of Computer Science at the TU Dresden, where he is also affiliated with the Center for Advancing Electronics Dresden (CfAED). He is the head of the Chair for Compiler Construction, with a research focus on methodologies, languages, tools and algorithms for programming complex computing systems. He received the Electronics Engineering degree from the Pontificia Bolivariana University in Colombia in 2004, his master's degree from the ALaRI Institute in Switzerland in 2006 and his Ph.D. degree (Dr.-Ing.) with honors from the RWTH Aachen University in Germany in 2013. In 2014, Prof. Castrillon co-founded Silexica GmbH/Inc, a company that provides programming tools for embedded multicore architectures, now with Xilinx/AMD.

Homepage

Challenges of Scaling Deep Learning on HPC Systems

Abstract: Machine learning, and the training of deep learning models in particular, is becoming one of the main workloads running on HPC systems. Moreover, the scientific computing community is increasingly adopting modern deep learning approaches in its workflows. When HPC practitioners attempt to scale a typical HPC workload, they are mostly challenged by one particular bottleneck. Scaling deep learning, on the other hand, can be challenged by several different bottlenecks: memory capacity, communication, I/O, compute, etc. In this talk we give an overview of the bottlenecks in scaling deep learning and highlight efforts to address two of them: memory capacity and I/O.

Bio: Mohamed Wahib is a team leader of the "High Performance Artificial Intelligence Systems Research Team" at RIKEN Center for Computational Science (R-CCS), Kobe, Japan. Prior to that he worked as a senior scientist at AIST/TokyoTech Open Innovation Laboratory, Tokyo, Japan. He received his Ph.D. in Computer Science in 2012 from Hokkaido University, Japan. His research interests revolve around the central topic of high-performance programming systems, in the context of HPC and AI. He is actively working on several projects including high-level frameworks for programming traditional scientific applications, as well as high-performance AI.

Homepage

Co-Optimization of Computation and Data Layout to Optimize Data Movement

Abstract: Code generation and optimization for the diversity of current and future architectures must focus on reducing data movement to achieve high performance. How data is laid out in memory, and representations that compress data (e.g., reduced floating-point precision), have a profound impact on data movement. Moreover, the cost of data movement in a program is architecture-specific, and consequently, optimizing data layout and data representation must be performed by a compiler once the target architecture is known. With this context in mind, this talk will provide examples of data layout and data representation optimizations, and call for integrating these data properties into code generation and optimization systems.
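To make the link between layout, representation, and data movement concrete, here is a small sketch that is not taken from the talk. It stores the same three-field records as an interleaved array of structs (AoS) and as a struct of arrays (SoA), then sums a single field. The helper names and the traffic model are assumptions for illustration: the model simply supposes that a strided walk over the interleaved buffer streams the whole buffer through memory, which ignores cache-line granularity and other architecture-specific effects.

```python
from array import array


def aos_layout(xs, ys, zs, typecode="d"):
    """Array of structs: x0, y0, z0, x1, y1, z1, ... in one buffer."""
    buf = array(typecode)
    for x, y, z in zip(xs, ys, zs):
        buf.extend((x, y, z))
    return buf


def soa_layout(xs, ys, zs, typecode="d"):
    """Struct of arrays: one contiguous buffer per field."""
    return {
        "x": array(typecode, xs),
        "y": array(typecode, ys),
        "z": array(typecode, zs),
    }


def buffer_bytes(buf):
    """Size in bytes of the payload held by an array."""
    return len(buf) * buf.itemsize


n = 1000
xs = [float(i) for i in range(n)]
ys = [0.5] * n
zs = [2.0] * n

aos = aos_layout(xs, ys, zs)                  # double precision, interleaved
soa = soa_layout(xs, ys, zs)                  # double precision, per field
soa32 = soa_layout(xs, ys, zs, typecode="f")  # single precision, per field

# Sum only the x field under each layout.
sum_aos = sum(aos[0::3])   # strided walk over the interleaved buffer
sum_soa = sum(soa["x"])    # contiguous walk over one field
sum_soa32 = sum(soa32["x"])

# Simplified traffic model (assumption): bytes of the buffer that must be
# streamed to read every x value.
traffic = {
    "aos_f64": buffer_bytes(aos),
    "soa_f64": buffer_bytes(soa["x"]),
    "soa_f32": buffer_bytes(soa32["x"]),
}
```

Under this model, the SoA layout streams one third of the bytes of the AoS layout for this access pattern, and dropping to single precision halves the traffic again on platforms where a C `double` is twice the size of a `float`. Whether such savings materialize on real hardware depends on the target architecture, which is the point the abstract makes about deferring these choices to the compiler.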